Deploy ZooKeeper (three nodes)
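
The StatefulSet in this section gives each pod a stable name (`zookeeper-0`, `zookeeper-1`, `zookeeper-2`), and its `set-id` init container turns the trailing ordinal into the `myid` that matches the `server.N` entries in `zoo.cfg`. The following is a minimal sketch of that pipeline, run locally rather than in the cluster. `myid_from_hostname` is a hypothetical helper name introduced only for illustration, and it takes the hostname as an argument instead of calling `hostname`. Note that `cut -f 2` relies on the pod name containing exactly one hyphen.

```shell
#!/bin/sh
# Sketch: derive a ZooKeeper myid from a StatefulSet pod hostname.
# myid_from_hostname is a hypothetical helper, not part of ZooKeeper.
myid_from_hostname() {
    # Same pipeline as the set-id init container: take the field after
    # the first '-' (the pod ordinal) and add 1, because server ids in
    # zoo.cfg start at 1 while StatefulSet ordinals start at 0.
    printf '%s\n' "$1" | cut -d '-' -f 2 | awk '{print $0 + 1}'
}

for pod in zookeeper-0 zookeeper-1 zookeeper-2; do
    echo "$pod -> myid $(myid_from_hostname "$pod")"
done
```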

apiVersion: v1
kind: Service
metadata:
  name: zookeeper-headless
  namespace: kafka
  labels:
    app: zookeeper
spec:
  ports:
  - port: 2181
    name: client
  - port: 2888
    name: follower
  - port: 3888
    name: election
  clusterIP: None
  selector:
    app: zookeeper

---

apiVersion: v1
kind: Service
metadata:
  name: zookeeper-adminserver
  namespace: kafka
  labels:
    app: zookeeper
spec:
  type: NodePort
  ports:
  - port: 8080
    name: adminserver
    nodePort: 31801
  selector:
    app: zookeeper

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: zookeeper-config
  namespace: kafka
  labels:
    app: zookeeper
data:
  zoo.cfg: |+
    tickTime=2000
    initLimit=10
    syncLimit=5
    dataDir=/data
    dataLogDir=/datalog
    clientPort=2181
    server.1=zookeeper-0.zookeeper-headless.kafka.svc.cluster.local:2888:3888
    server.2=zookeeper-1.zookeeper-headless.kafka.svc.cluster.local:2888:3888
    server.3=zookeeper-2.zookeeper-headless.kafka.svc.cluster.local:2888:3888
    4lw.commands.whitelist=*

---

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zookeeper
  namespace: kafka
spec:
  serviceName: "zookeeper-headless"
  replicas: 3
  selector:
    matchLabels:
      app: zookeeper
  template:
    metadata:
      labels:
        app: zookeeper
    spec:
      initContainers:
      - name: set-id
        # Upstream image: busybox:latest
        image: harbor.basepoint.net/library/busybox:latest
        command: ['sh', '-c', "hostname | cut -d '-' -f 2 | awk '{print $0 + 1}' > /data/myid"]
        volumeMounts:
        - name: data
          mountPath: /data
      containers:
      - name: zookeeper
        # Upstream image: zookeeper:3.9
        image: harbor.basepoint.net/library/zookeeper:3.9
        imagePullPolicy: IfNotPresent
        resources:
          requests:
            memory: "500Mi"
            cpu: "500m"
          limits:
            memory: "1000Mi"
            cpu: "1000m"
        ports:
        - containerPort: 2181
          name: client
        - containerPort: 2888
          name: follower
        - containerPort: 3888
          name: election
        volumeMounts:
        - name: zook-config
          mountPath: /conf/zoo.cfg
          subPath: zoo.cfg
        - name: data
          mountPath: /data
        env:
        - name: MY_POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
      volumes:
      - name: zook-config
        configMap:
          name: zookeeper-config
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: ["ReadWriteMany"]
      storageClassName: longhorn
      resources:
        requests:
          storage: 10Gi

Deploy Kafka (three nodes)
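
The Kafka StatefulSet in this section sets `broker.id` from the pod name with the shell expansion `${HOSTNAME##*-}`, which removes everything up to and including the last hyphen. Unlike the ZooKeeper `myid`, the ordinal is used unchanged, so `kafka-0` becomes broker 0. A small sketch of that expansion follows; `broker_id_from_hostname` is a hypothetical helper name used only for this illustration.

```shell
#!/bin/sh
# Sketch: how ${HOSTNAME##*-} maps a StatefulSet pod name to a broker id.
# broker_id_from_hostname is a hypothetical helper, not a Kafka tool.
broker_id_from_hostname() {
    name=$1
    # "##*-" strips the longest prefix that ends in '-', which leaves
    # only the trailing ordinal of the pod name.
    printf '%s\n' "${name##*-}"
}

for pod in kafka-0 kafka-1 kafka-2; do
    echo "$pod -> broker.id $(broker_id_from_hostname "$pod")"
done
```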

apiVersion: v1
kind: Service
metadata:
  name: kafka-headless
  namespace: kafka
  labels:
    app: kafka
spec:
  ports:
  - port: 9092
    name: server
  clusterIP: None
  selector:
    app: kafka

---

apiVersion: v1
kind: Service
metadata:
  name: kafka
  namespace: kafka
spec:
  type: NodePort
  selector:
    app: kafka
  ports:
  - protocol: TCP
    port: 9092
    targetPort: 9092
    nodePort: 30092
    name: bootstrap

---

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
  namespace: kafka
spec:
  serviceName: "kafka-headless"
  replicas: 3
  selector:
    matchLabels:
      app: kafka
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 1
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: "app"
                  operator: In
                  values:
                  - kafka
              topologyKey: "kubernetes.io/hostname"
      securityContext:
        runAsUser: 0
      containers:
      - name: kafka
        # Upstream image: apache/kafka:3.7.0
        image: harbor.basepoint.net/library/kafka:3.7.0
        imagePullPolicy: IfNotPresent
        resources:
          requests:
            memory: "500Mi"
            cpu: "500m"
          limits:
            memory: "1000Mi"
            cpu: "2000m"
        ports:
        - containerPort: 9092
          name: server
        #env:
        # KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://172.25.2.4:30092
        # --override advertised.listeners=PLAINTEXT://172.25.2.4:30092 \
        command:
        - sh
        - -c
        - "exec /opt/kafka/bin/kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} \
          --override listeners=PLAINTEXT://:9092 \
          --override zookeeper.connect=zookeeper-0.zookeeper-headless:2181,zookeeper-1.zookeeper-headless:2181,zookeeper-2.zookeeper-headless:2181 \
          --override log.dirs=/var/lib/kafka/data/logs \
          --override auto.create.topics.enable=true \
          --override auto.leader.rebalance.enable=true \
          --override background.threads=10 \
          --override compression.type=producer \
          --override delete.topic.enable=true \
          --override leader.imbalance.check.interval.seconds=300 \
          --override leader.imbalance.per.broker.percentage=10 \
          --override log.flush.interval.messages=9223372036854775807 \
          --override log.flush.offset.checkpoint.interval.ms=60000 \
          --override log.flush.scheduler.interval.ms=9223372036854775807 \
          --override log.retention.bytes=-1 \
          --override log.retention.hours=168 \
          --override log.roll.hours=168 \
          --override log.roll.jitter.hours=0 \
          --override log.segment.bytes=1073741824 \
          --override log.segment.delete.delay.ms=60000 \
          --override message.max.bytes=1000012 \
          --override min.insync.replicas=1 \
          --override num.io.threads=8 \
          --override num.network.threads=3 \
          --override num.recovery.threads.per.data.dir=1 \
          --override num.replica.fetchers=1 \
          --override offset.metadata.max.bytes=4096 \
          --override offsets.commit.required.acks=-1 \
          --override offsets.commit.timeout.ms=5000 \
          --override offsets.load.buffer.size=5242880 \
          --override offsets.retention.check.interval.ms=600000 \
          --override offsets.retention.minutes=1440 \
          --override offsets.topic.compression.codec=0 \
          --override offsets.topic.num.partitions=50 \
          --override offsets.topic.replication.factor=3 \
          --override offsets.topic.segment.bytes=104857600 \
          --override queued.max.requests=500 \
          --override quota.consumer.default=9223372036854775807 \
          --override quota.producer.default=9223372036854775807 \
          --override replica.fetch.min.bytes=1 \
          --override replica.fetch.wait.max.ms=500 \
          --override replica.high.watermark.checkpoint.interval.ms=5000 \
          --override replica.lag.time.max.ms=10000 \
          --override replica.socket.receive.buffer.bytes=65536 \
          --override replica.socket.timeout.ms=30000 \
          --override request.timeout.ms=30000 \
          --override socket.receive.buffer.bytes=102400 \
          --override socket.request.max.bytes=104857600 \
          --override socket.send.buffer.bytes=102400 \
          --override unclean.leader.election.enable=true \
          --override zookeeper.session.timeout.ms=6000 \
          --override zookeeper.set.acl=false \
          --override broker.id.generation.enable=true \
          --override connections.max.idle.ms=600000 \
          --override controlled.shutdown.enable=true \
          --override controlled.shutdown.max.retries=3 \
          --override controlled.shutdown.retry.backoff.ms=5000 \
          --override controller.socket.timeout.ms=30000 \
          --override default.replication.factor=1 \
          --override fetch.purgatory.purge.interval.requests=1000 \
          --override group.max.session.timeout.ms=300000 \
          --override group.min.session.timeout.ms=6000 \
          --override log.cleaner.backoff.ms=15000 \
          --override log.cleaner.dedupe.buffer.size=134217728 \
          --override log.cleaner.delete.retention.ms=86400000 \
          --override log.cleaner.enable=true \
          --override log.cleaner.io.buffer.load.factor=0.9 \
          --override log.cleaner.io.buffer.size=524288 \
          --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308 \
          --override log.cleaner.min.cleanable.ratio=0.5 \
          --override log.cleaner.min.compaction.lag.ms=0 \
          --override log.cleaner.threads=1 \
          --override log.cleanup.policy=delete \
          --override log.index.interval.bytes=4096 \
          --override log.index.size.max.bytes=10485760 \
          --override log.message.timestamp.difference.max.ms=9223372036854775807 \
          --override log.message.timestamp.type=CreateTime \
          --override log.preallocate=false \
          --override log.retention.check.interval.ms=300000 \
          --override max.connections.per.ip=2147483647 \
          --override num.partitions=1 \
          --override producer.purgatory.purge.interval.requests=1000 \
          --override replica.fetch.backoff.ms=1000 \
          --override replica.fetch.max.bytes=1048576 \
          --override replica.fetch.response.max.bytes=10485760 \
          --override reserved.broker.max.id=1000"
        volumeMounts:
        - name: data
          mountPath: /var/lib/kafka/data
        env:
        - name: MY_POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: ALLOW_PLAINTEXT_LISTENER
          value: "yes"
        - name: KAFKA_HEAP_OPTS
          value: "-Xms1g -Xmx1g"
        - name: KAFKA_OPTS
          value: "-Dlogging.level=INFO"
        - name: JMX_PORT
          value: "5555"
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: ["ReadWriteOnce"]
      storageClassName: longhorn
      resources:
        requests:
          storage: 10Gi

Check that all pods start without errors
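
This check can also be scripted. The sketch below reads the table printed by `kubectl get pod` on standard input and succeeds only when every pod reports `Running` with all of its containers ready. `all_pods_ready` is a hypothetical helper name, and the sample rows are a shortened copy of the listing in this section, so the script runs without cluster access.

```shell
#!/bin/sh
# Sketch: succeed only if every pod in a `kubectl get pod` table is
# Running and fully ready. all_pods_ready is a hypothetical helper.
all_pods_ready() {
    awk 'NR > 1 && NF >= 3 {
        seen++
        # READY looks like "1/1": ready containers / total containers.
        split($2, ready, "/")
        if ($3 != "Running" || ready[1] != ready[2]) bad++
    }
    END { if (seen == 0 || bad > 0) exit 1 }'
}

# Against a real cluster (requires kubectl access):
#   kubectl get pod -n kafka | all_pods_ready && echo "all pods ready"
if all_pods_ready <<'EOF'
NAME          READY   STATUS    RESTARTS   AGE
kafka-0       1/1     Running   0          1m
zookeeper-0   1/1     Running   0          7m
EOF
then
    echo "all pods ready"
else
    echo "some pods are not ready"
fi
```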

[root@k8s-h3c-master01 kafka]# kubectl get pod -n kafka
NAME          READY   STATUS    RESTARTS   AGE
kafka-0       1/1     Running   0          1m
kafka-1       1/1     Running   0          1m
kafka-2       1/1     Running   0          1m
zookeeper-0   1/1     Running   0          7m
zookeeper-1   1/1     Running   0          7m
zookeeper-2   1/1     Running   0          7m

Deploy Kafka-eagle for visualization
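
The `EFAK_CLUSTER_ZK_LIST` value in the Deployment in this section lists every ZooKeeper pod by its StatefulSet DNS name, which has the form `<pod>.<headless service>.<namespace>.svc.cluster.local`. As a sketch, the same list can be generated from the replica count. `zk_connect_string` is a hypothetical helper, and the service name, namespace, port, and `cluster.local` domain are copied from the manifests in this document rather than discovered from the cluster.

```shell
#!/bin/sh
# Sketch: build the comma-separated ZooKeeper address list for N replicas.
# zk_connect_string is a hypothetical helper; the names below are taken
# from the manifests in this document.
zk_connect_string() {
    replicas=$1
    list=""
    i=0
    while [ "$i" -lt "$replicas" ]; do
        host="zookeeper-$i.zookeeper-headless.kafka.svc.cluster.local:2181"
        if [ -z "$list" ]; then
            list=$host
        else
            list="$list,$host"
        fi
        i=$((i + 1))
    done
    printf '%s\n' "$list"
}

zk_connect_string 3
```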

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kafka-eagle
  namespace: kafka
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kafka-eagle
  template:
    metadata:
      labels:
        app: kafka-eagle
    spec:
      restartPolicy: Always
      containers:
      # Upstream image: nickzurich/efak:3.0.1
      - image: harbor.basepoint.net/library/efak:3.0.1
        imagePullPolicy: IfNotPresent
        name: kafka-eagle
        env:
        - name: EFAK_CLUSTER_ZK_LIST
          value: zookeeper-0.zookeeper-headless.kafka.svc.cluster.local:2181,zookeeper-1.zookeeper-headless.kafka.svc.cluster.local:2181,zookeeper-2.zookeeper-headless.kafka.svc.cluster.local:2181
        ports:
        - containerPort: 8048
          name: web

---

apiVersion: v1

kind: Service

metadata:

name: kafka-eagle

namespace: kafka

spec:

type: NodePort

ports:

- port: 8048

targetPort: 8048

nodePort: 30048

name: web

selector:

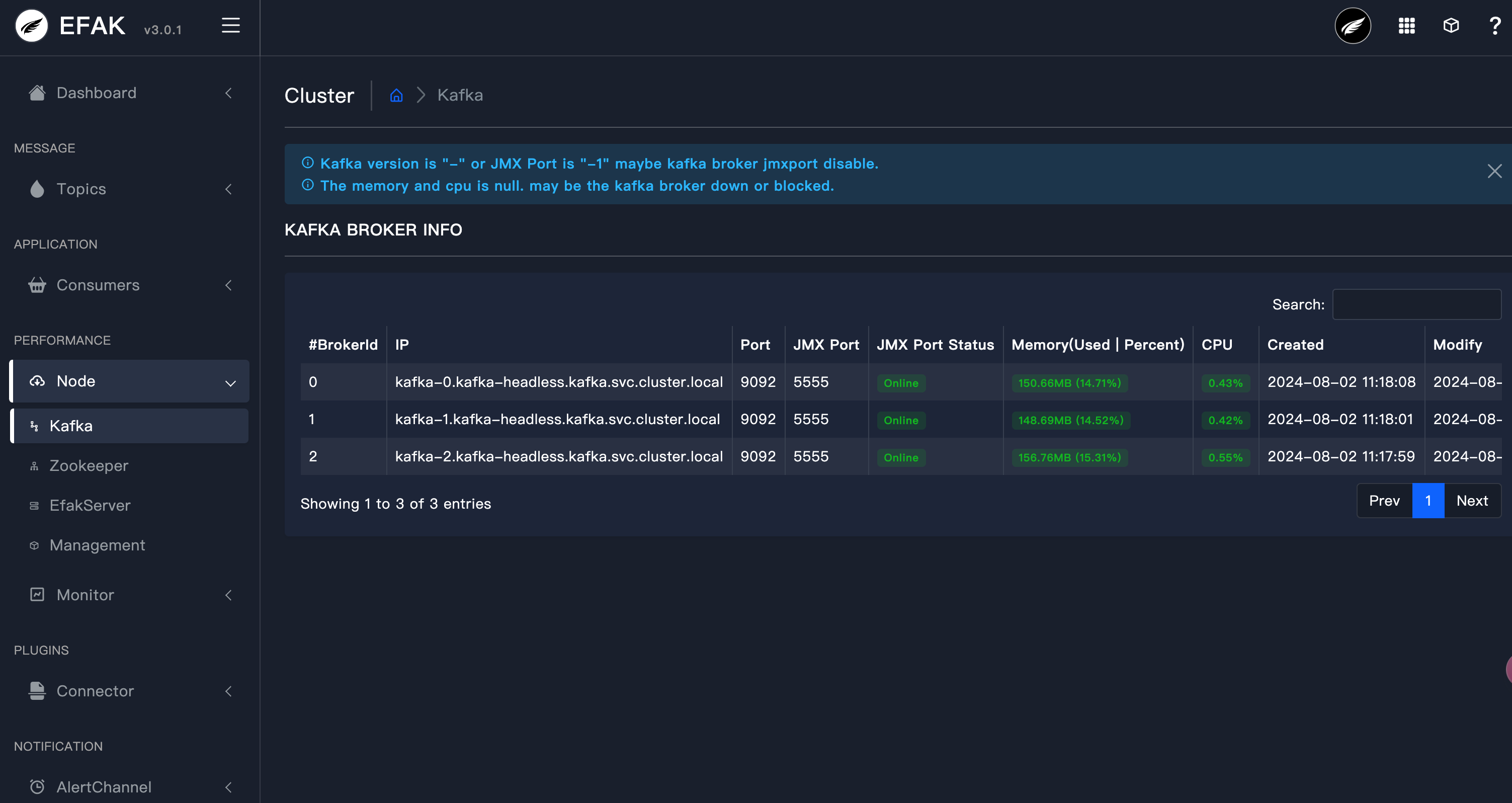

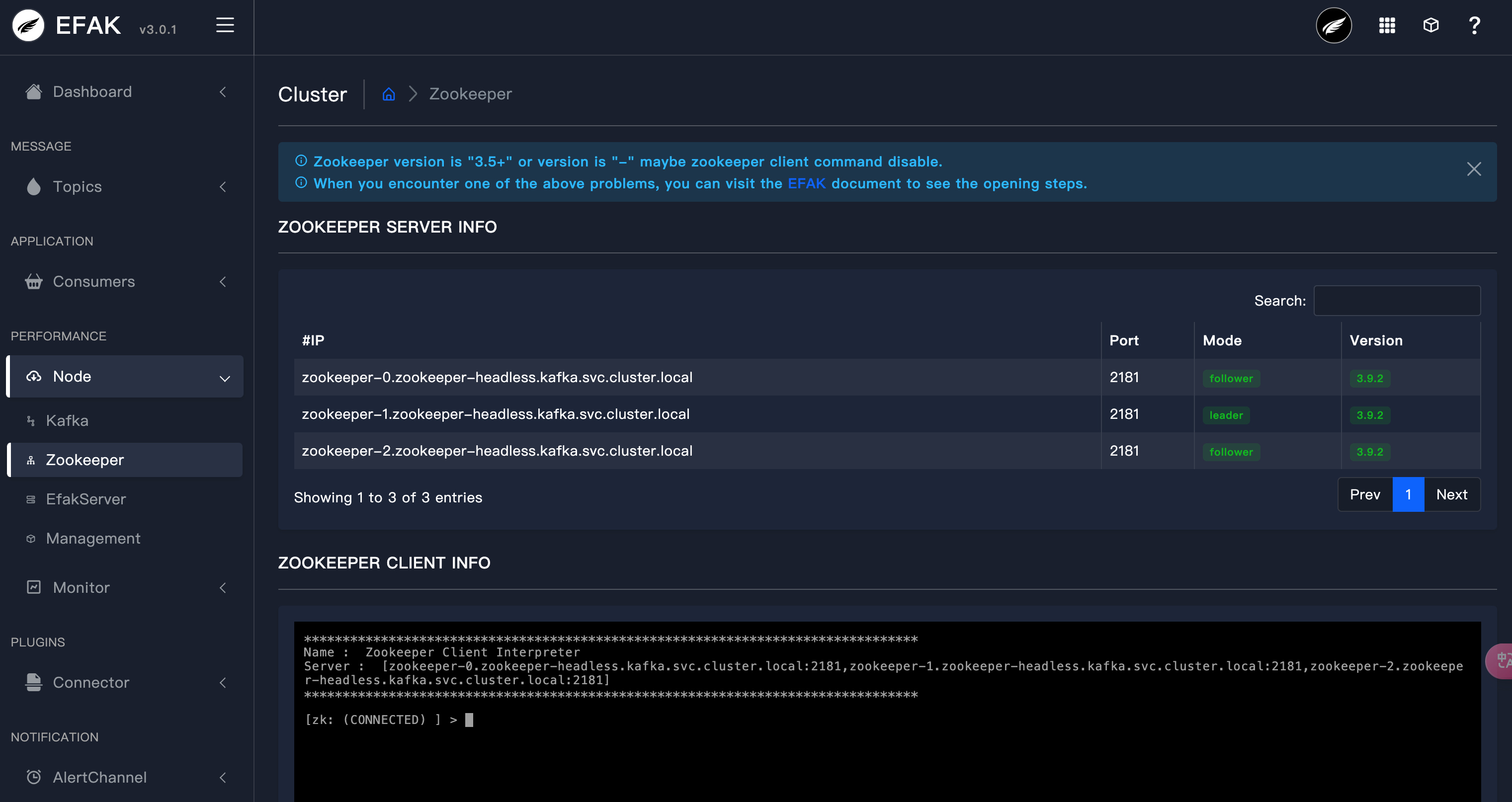

app: kafka-eagle验证

登录eagle,http://ip:30048

账户admin,密码123456